While a graduate student at the University of Chicago, I taught an introductory statistics class, mostly to economics majors. The most memorable and long-lasting lesson of that class came during a lecture on the St. Petersburg paradox.

We play the following game. Toss a fair coin indefinitely. The amount I owe you starts at $1 and doubles every time a head occurs. The game ends, and I pay what I owe, after the first tail occurs. For example, if the outcomes are heads, heads, heads, tails, then I’ll owe you 2 x 2 x 2 = $8. If the first outcome is a tail then I owe you $1. And so on. How much would you pay to play this game? (More on this question below.)
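For readers who want to try the game without risking any money, here is a minimal simulation sketch. The function name `play_round` and the fixed seed are my own choices for illustration, not part of the original classroom demonstration.

```python
import random

def play_round(rng: random.Random) -> int:
    """Play one round: the payout starts at $1 and doubles on every head
    until the first tail ends the game."""
    payout = 1
    while rng.random() < 0.5:  # treat this event as "heads" (probability 1/2)
        payout *= 2
    return payout

rng = random.Random(0)  # fixed seed so the run is repeatable
payouts = [play_round(rng) for _ in range(10)]
print(payouts)  # ten payouts, each a power of two
```

Most rounds pay out only a dollar or two; the large payouts are rare, which is what makes the game hard to price.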

I illustrated this in a class demonstration in which a student offered to pay $5 in exchange for whatever random payout resulted from the game. (Before we played, we both agreed that I would pay out a maximum of $512, which would only occur if 9 or more heads were tossed in a row, less than 0.2% of the time.)

Long story short, I tossed 8 heads in a row, forcing me to pay $251 ($256 minus the $5 stake).

The example offers two important lessons: one conventional lesson on risk and another practical lesson. In the years since, the practical lesson has proven to be worth much more than the $251 it cost me. I’ll discuss the conventional lesson first.

The Conventional (Technical) Lesson: Expectation isn’t everything

In games of chance, a common way to compute the fair value of a game is by expected value. In a single toss of a fair coin (50% heads/50% tails) in which I win $10 if heads and $4 if tails, the expected value is 50% x $10 + 50% x $4 = $7, meaning that if I pay $7 to play then neither side expects to profit in the long run. If I pay less than $7, I expect to profit long-term; and if I pay more than $7, I expect to lose long-term.

In the St. Petersburg game, the expected value of all payouts is infinite. (More information on this calculation and the history of the game is available here.) So by the above logic, a person playing the game can expect an infinite long-term profit no matter how much they pay to play. If I pay $100 to play, my expectation is infinity - $100 = infinity. If I pay $10,000 to play, my expectation is still infinity - $10,000 = infinity. And so on for any finite value.
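As a sketch of where the infinity comes from: write k for the number of heads tossed before the first tail (the symbol k is introduced here only for illustration). That outcome pays 2^k dollars and occurs with probability (1/2)^(k+1), so every possible outcome contributes exactly half a dollar to the expectation, and there are infinitely many outcomes.

```latex
\[
E[\text{payout}]
  = \sum_{k=0}^{\infty} 2^{k} \left(\frac{1}{2}\right)^{k+1}
  = \sum_{k=0}^{\infty} \frac{1}{2}
  = \infty
\]
```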

So the above theory suggests that it is profitable to pay any finite amount to play the game, but the paradox arises when we observe how much people are actually willing to pay. Anecdotally, $5 and $20 seem to be the most popular answers when people are asked.

It’s an old problem with a number of proposed solutions that I won’t discuss in much detail here. (Wikipedia gives a good overview with references.) Most of the solutions are cloaked in complicated frameworks and arcane technical concepts, such as expected utility theory and ergodicity, offering stylized explanations that still fail to identify the root cause of the paradox.

The easiest way to understand the discrepancy between the theoretical and practical answers is by reading the definition of expected value carefully: expected value is a long-run average that is realized only if a game/situation can be repeated an indefinite number of times.

The “infinite” expected value of the St. Petersburg game can only be realized if one could (in principle) play the game a sufficiently large number of times in order to realize that value.

I say “in principle” above because it is not necessary that we actually play a game indefinitely for it to be a good bet. The only requirement is that we could play the game indefinitely, if we were given the opportunity to do so. Two possible limitations that would prevent one from meeting this requirement are time and money. So, for example, if the game costs $100 to play and I only have a net worth of $100, then I face the possibility of going broke after one round and being unable to play additional times to get my money back. This consideration is missing from the initial discussion of the St. Petersburg paradox.
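The going-broke scenario can be put in rough numbers with a small simulation. This is only a sketch under my own assumptions: "ruin" is defined as no longer being able to afford the entry fee, and the names `ruin_probability`, `max_rounds`, and `trials` are hypothetical choices for illustration.

```python
import random

def play_round(rng: random.Random) -> int:
    """One round of the game: $1, doubled on each head before the first tail."""
    payout = 1
    while rng.random() < 0.5:
        payout *= 2
    return payout

def ruin_probability(bankroll, fee, max_rounds, trials=20_000, seed=0):
    """Estimate the chance that a player who pays `fee` per round can no
    longer afford the fee after playing at most `max_rounds` rounds."""
    rng = random.Random(seed)
    ruined = 0
    for _ in range(trials):
        wealth = bankroll
        for _ in range(max_rounds):
            if wealth < fee:  # cannot afford another round
                break
            wealth += play_round(rng) - fee
        if wealth < fee:
            ruined += 1
    return ruined / trials

# Net worth of $100, entry fee of $100, a single round.
print(ruin_probability(100, 100, max_rounds=1))
# Same fee and a single round, but a much larger bankroll.
print(ruin_probability(10_000, 100, max_rounds=1))
```

In the first case the player survives only if the single round pays at least $100, which takes 7 or more heads in a row (probability 1/128), so the estimate should land near 127/128. With $10,000 in hand, one bad round cannot end the game.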

The amount one is willing to pay, therefore, should depend not only on the expected value of the game but also on how much one can afford to pay in order to guarantee (or nearly guarantee) that they can play the game long enough to realize its expected value.

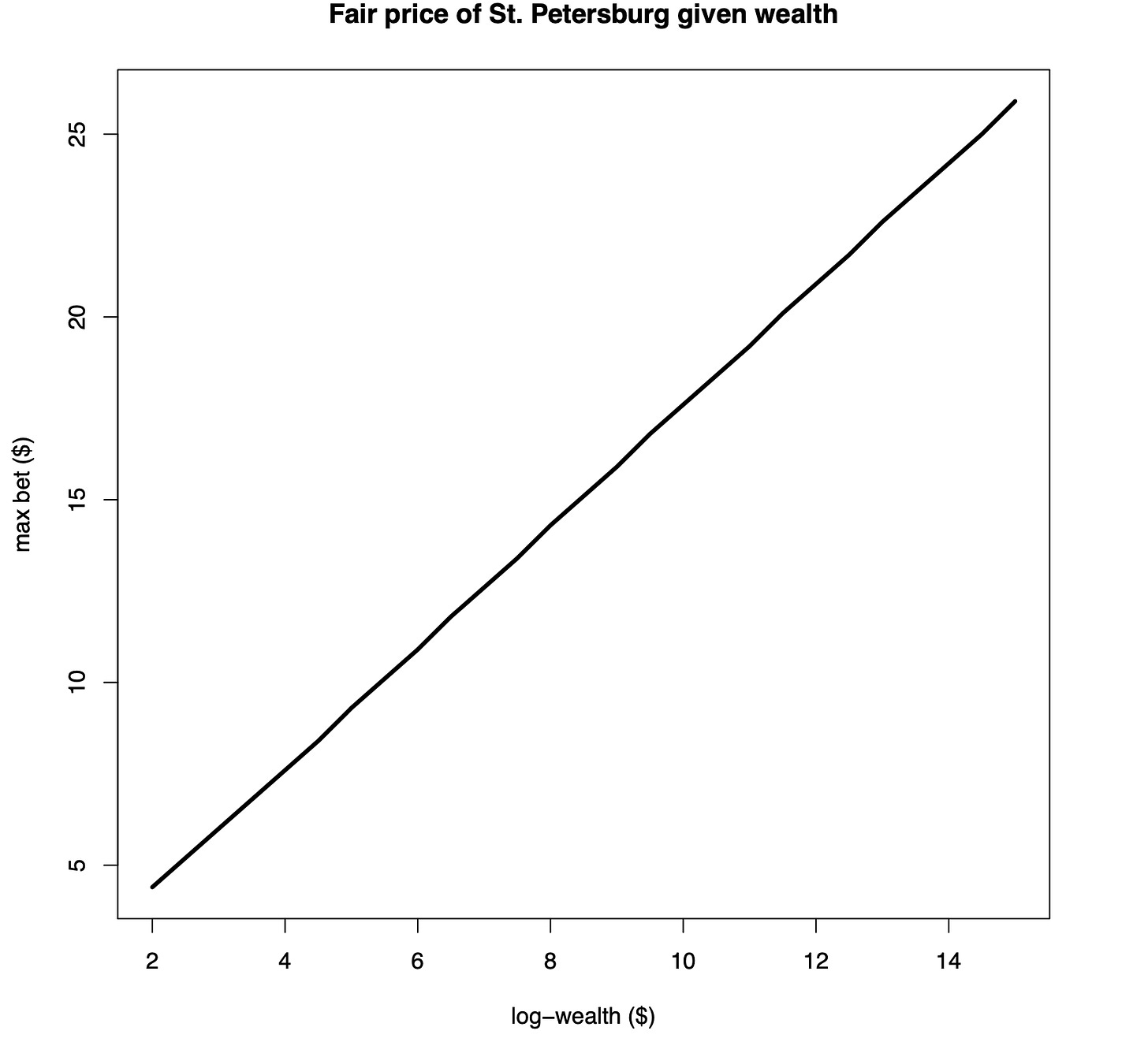

This is why risk of ruin, not just expected value, is a critical consideration of any risky proposition. When factoring in risk of ruin, the amount one is willing to pay for the St. Petersburg game varies depending on wealth.

The risk of ruin concept is incorporated, though not directly, into Peters’s ergodicity solution to the St. Petersburg game, shown in the above plot. Under this framework, someone should only be willing to risk up to $20 if they have significant wealth, whereas $5 is a reasonable amount for the level of wealth of most people.

On its own, this is a valuable idea to internalize, as the concept of “positive expected value” is often presented in gambling and risk contexts as a be-all, end-all, without discussion of risk of ruin.

But the much more valuable lesson of my in-class demonstration was the practical lesson about what other people’s low expectations (of getting paid) say about their own attitude toward risk and loss.

The Practical Lesson: Expectation tells you everything

The technical lesson of the St. Petersburg paradox is that expectation (i.e., expected value) doesn’t tell us everything we need to know about a risky proposition. The practical lesson is that one’s expectations (i.e., personal, subjective expectations about a person or situation) tell us a lot about that person, and what we can expect of them if the roles are reversed.

After tossing the 8 heads in a row, I asked the winning student to see me after class and carried on with the rest of the lecture. After class, I handed him the $251 I owed, no questions asked. Both the student and the rest of the class were shocked that I paid the full amount, without saying a word. And I was even more surprised that they were surprised I would pay.

This led me to two realizations that have changed how I approach risk and my understanding of how other people approach risk, loss, and luck in general.

The students’ low expectations reflected either their prior observation of how someone else has reacted in a similar situation or how they would react if put in my same situation. For them, it would be normal (and even acceptable) for someone who gets unlucky to try weaseling out. (A typical professor in my same situation would offer to take the winning student out to lunch instead, or would avoid paying entirely by claiming it was “just a demonstration”.)

These low expectations highlight another important practical element of the St. Petersburg paradox that applies in other contexts: the expected value of a proposition should factor in not only the theoretical expected value (under optimal conditions and assuming perfect knowledge about the game) but also the practical expected value (such as how likely it is to get paid if one were to win).

If the student didn’t expect me to pay out above a certain amount, then this should have been reflected in the decision of how much to pay. As it was, I had already capped my losses at $512, an easy detail to gloss over, but one that reduces the infinite expected value of the St. Petersburg game all the way to $5.50, making the initial $5 stake slightly positive for the student (and slightly negative for me).
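The $5.50 figure can be checked with exact arithmetic. The sketch below is my own illustration (the function name `capped_expected_value` is hypothetical): it adds up the uncapped outcomes one by one, then lumps every longer run of heads into a single outcome that pays the cap.

```python
from fractions import Fraction

def capped_expected_value(cap: int) -> Fraction:
    """Exact expected payout when the payout is limited to `cap` dollars."""
    expected = Fraction(0)
    heads = 0
    # Outcomes that end below the cap: `heads` heads followed by a tail.
    while 2 ** heads < cap:
        expected += Fraction(2 ** heads) * Fraction(1, 2 ** (heads + 1))
        heads += 1
    # Every remaining sequence starts with at least `heads` heads in a row
    # (probability 1 / 2**heads) and pays the cap.
    expected += Fraction(cap) * Fraction(1, 2 ** heads)
    return expected

print(float(capped_expected_value(512)))  # 5.5
```

The nine outcomes below the cap (zero through eight heads) contribute $0.50 each, and the capped outcome (nine or more heads, probability 1/512) contributes the final $1.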

But none of this matters if I don’t pay what I owe.

Love this! One's bankroll management technique should include this "practical expected value" based on the trustworthiness of the entity you're betting against. My personal lesson on this was that in 2013 I bet notorious climate science denier Pat Michaels $250 (an amount he mocked as being almost too small to bother with) that we would have statistically significant warming (at the 95% confidence level) in the HadCRUTx data for the 25 year period starting in 1997.

https://www.drroyspencer.com/2013/09/pat-michaels-bets-on-25-years-of-no-warming/

I won, of course, and Pat was supposed to donate the $250 to the Climate Science Legal Defense Fund. I never got word that he paid. I did manage to contact a secretary of his who was quite rude when she told me that she did pass my message along to him, and that she would not respond to me further. So I think he welched.

And then he died.

So long, asshole.

https://www.nytimes.com/2022/07/22/climate/patrick-j-michaels-dead.html

-------

SS